Kevin Qinghong Lin

Ph.D. Student

Show Lab

Photo taken on Rottnest Island.

Biography

My research focuses on Video Understanding and Language Models, aiming to develop assistants to streamline human tasks.

News

- How far can AI help humans solve computer tasks? Check out our latest work in Awesome-GUI-Agent.

- 2024 July: MovieSeq got accepted by ECCV 2024.

- 2024 Jun: Check out our new work on GUI automation: VideoGUI and GUI Narrator.

- 2024 Jun: EgoVLP received the Egocentric Vision (EgoVis) Distinguished Paper Award.

- 2024 May: Recognized as a CVPR 2024 Outstanding Reviewer.

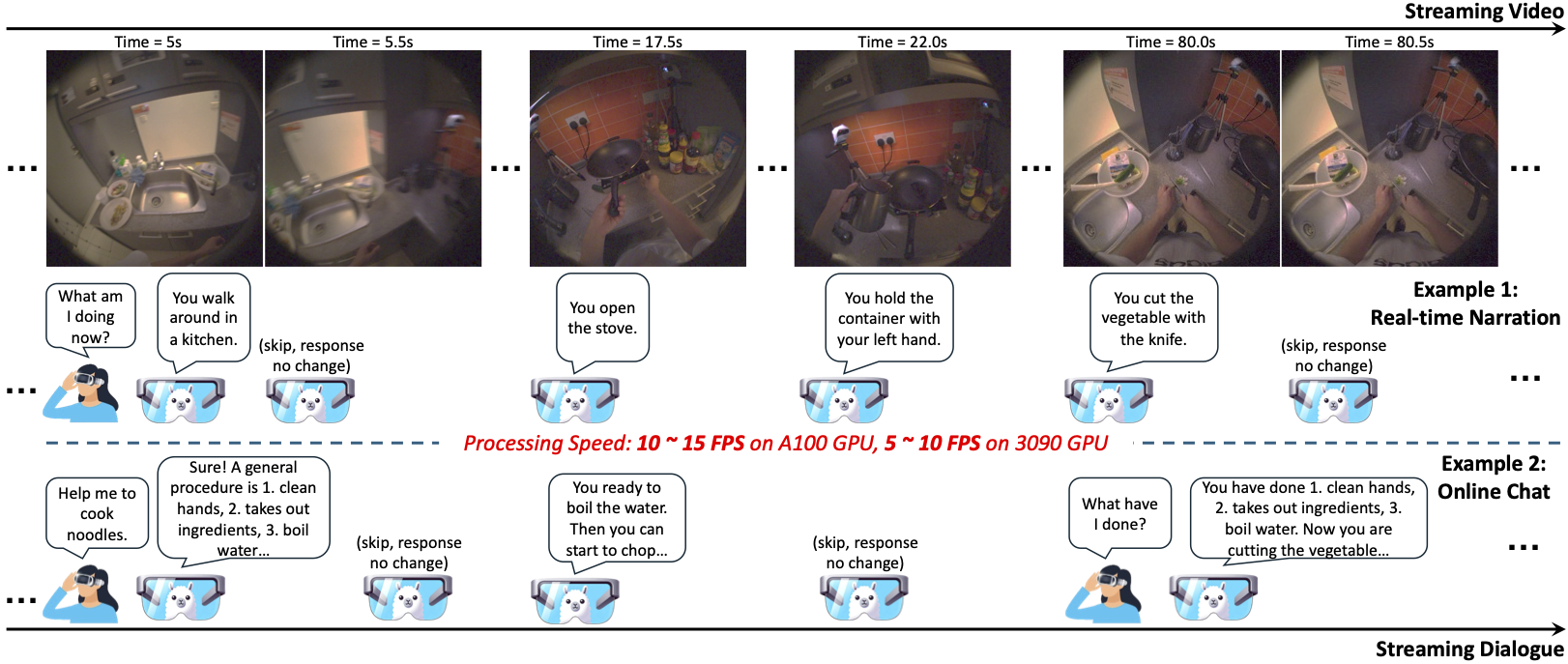

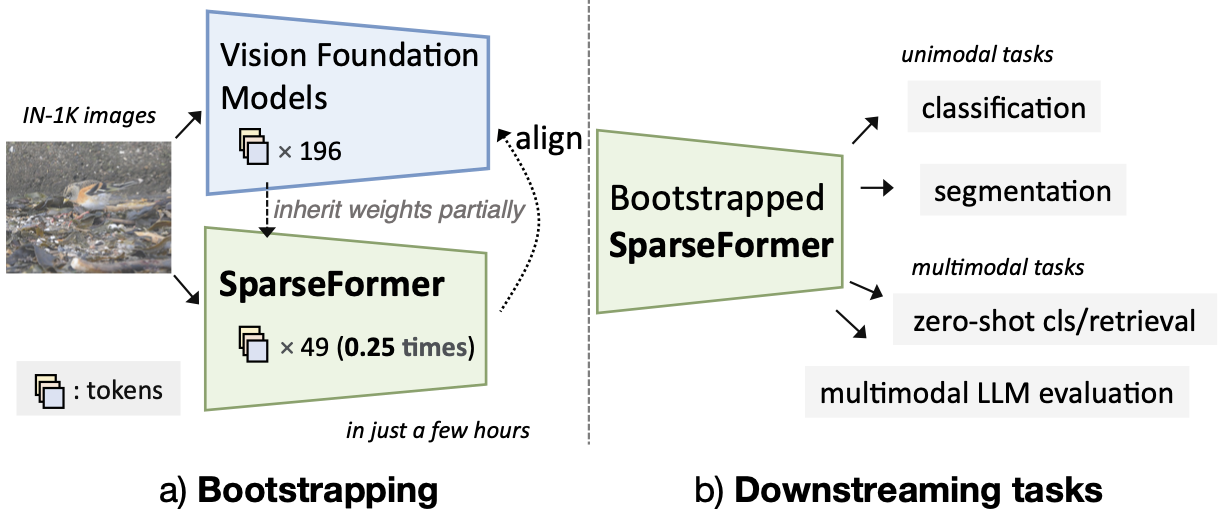

- 2024 Feb: VideoLLM-online and SparseFormer got accepted by CVPR 2024.

- 2023 Sept: VisorGPT got accepted by NeurIPS 2023.

- 2023 Aug: EgoVLP received the PREMIA Best Student Paper Award (Gold Award).

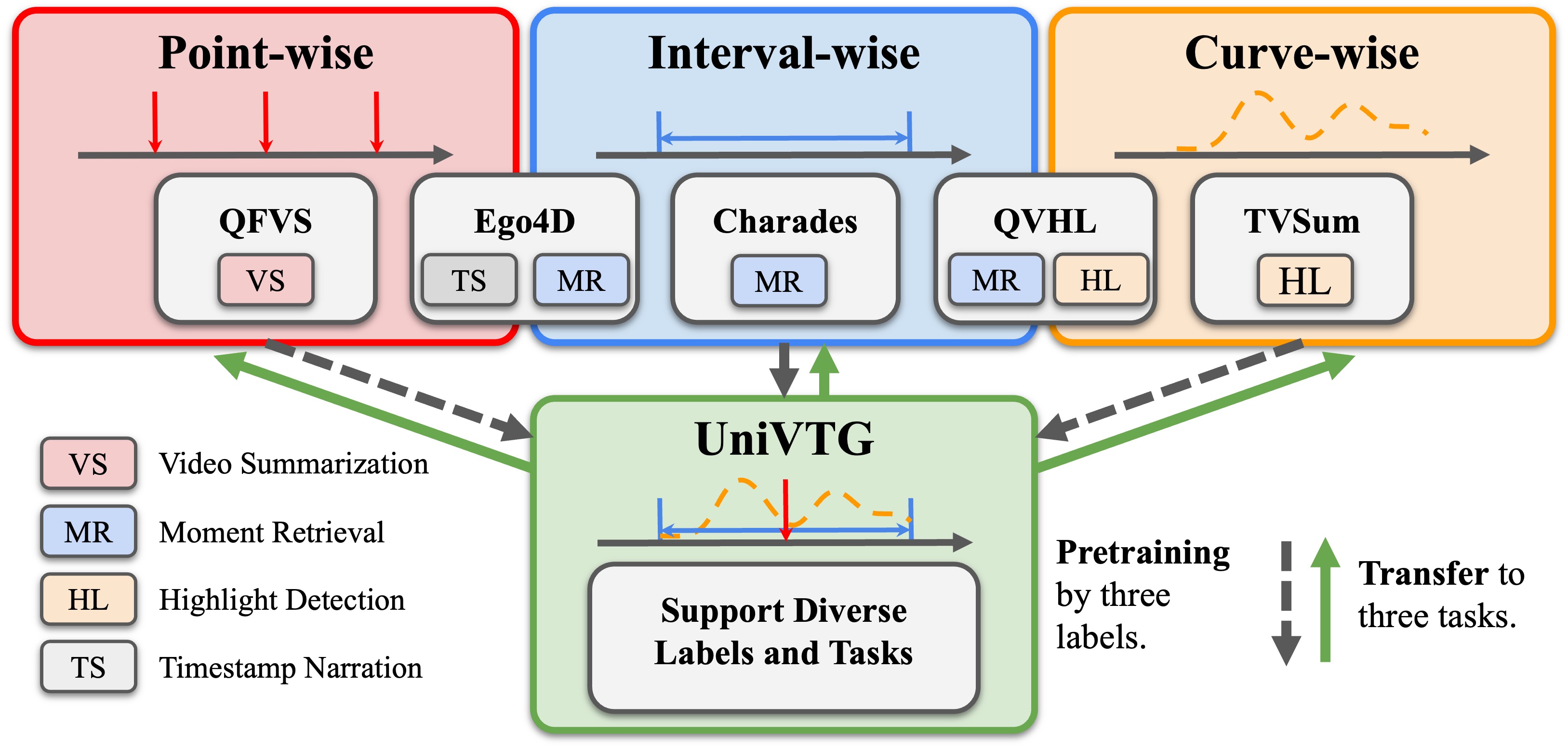

- 2023 July: UniVTG, EgoVLPv2, and TL;DR got accepted by ICCV 2023.

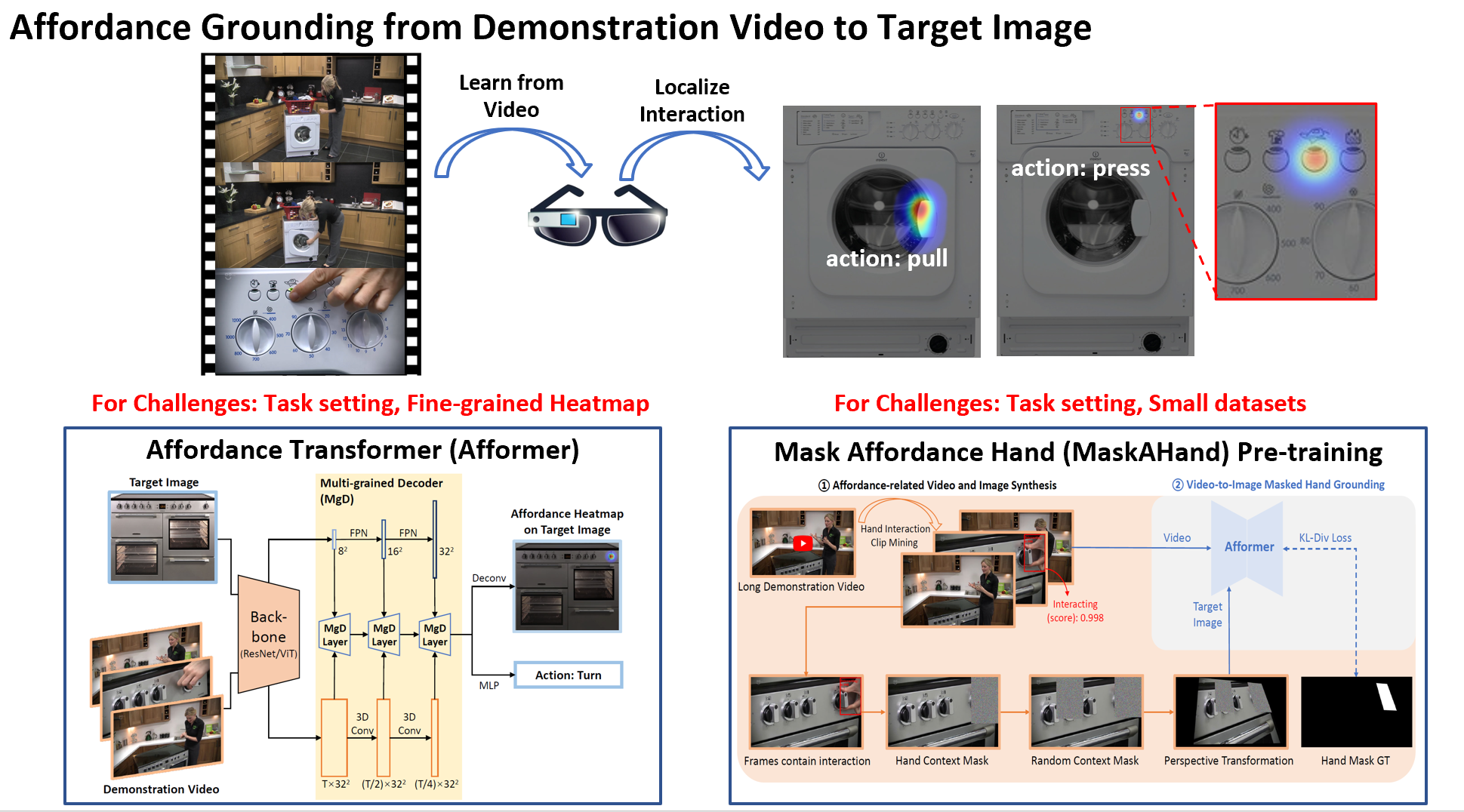

- 2023 Mar: All-in-one and Afformer got accepted by CVPR 2023.

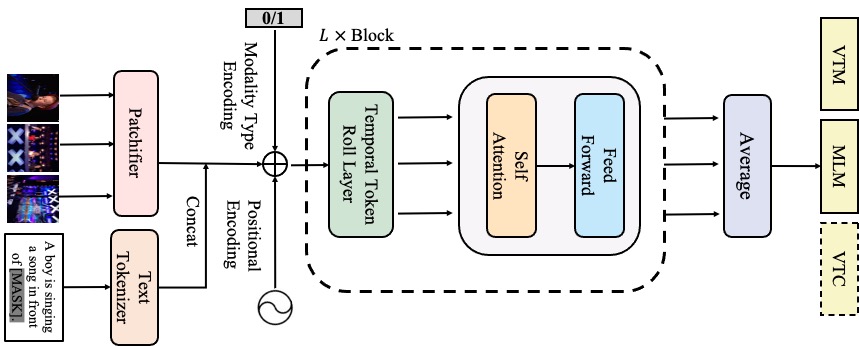

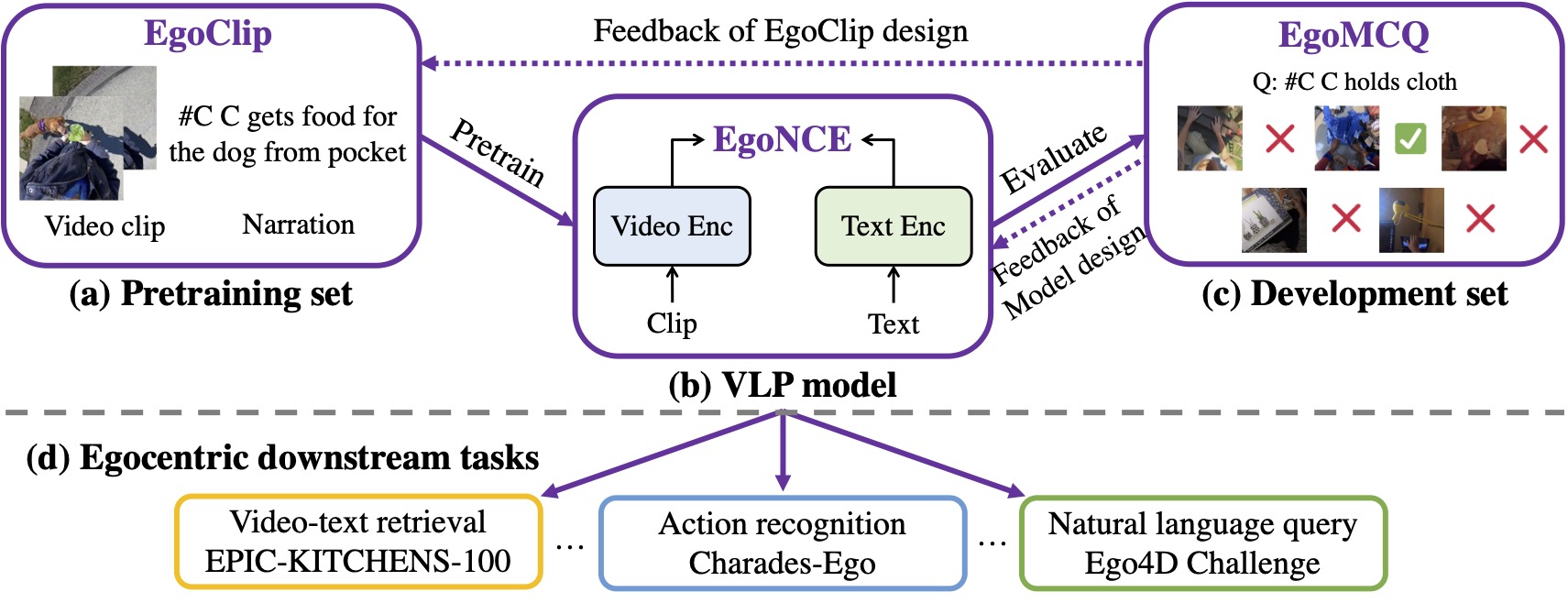

- 2022 Sept: EgoVLP got accepted by NeurIPS 2022 as Spotlight.

- 2022 Aug: Joined Show Lab @ NUS to start my Ph.D. journey!

- 2022 Jun: EgoVLP won double championships at the Joint 1st Ego4D and 10th EPIC Workshop, CVPR 2022. [News]

Preprints

|

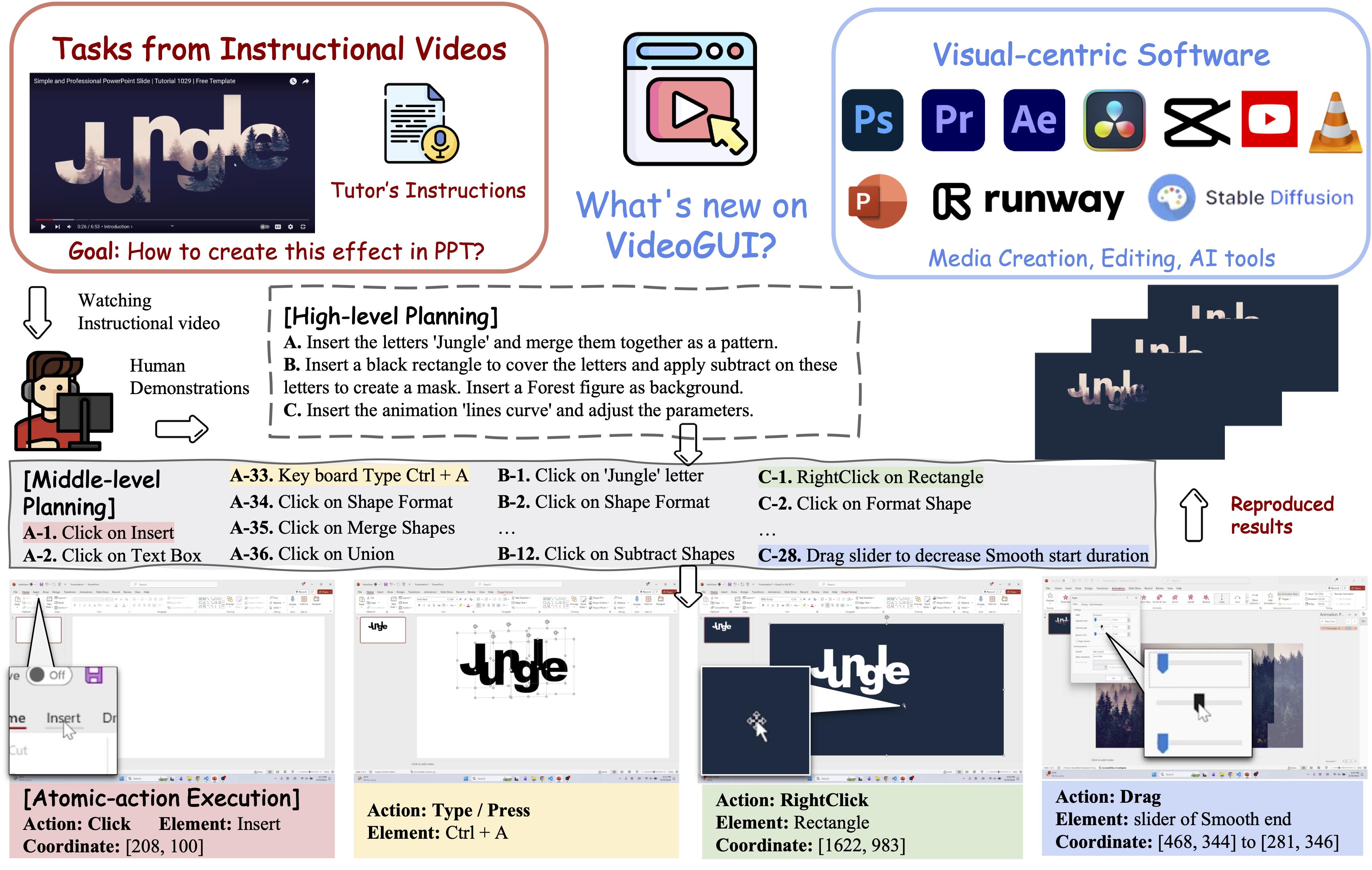

VideoGUI: A Benchmark for GUI Automation from Instructional Videos Kevin QH. Lin, Linjie Li, Difei Gao, Qinchen Wu, Mingyi Yan, Zhengyuan Yang, Lijuan Wang, Mike Z. Shou.

Preprint, 2024 |

CosMo: Contrastive Streamlined Multimodal Model With Interleaved Pre-Training

Alex JP. Wang, Linjie Li, Kevin QH. Lin, Jianfeng Wang, Kevin Lin, Zhengyuan Yang, Lijuan Wang, and Mike Z. Shou.

Preprint, 2023

|

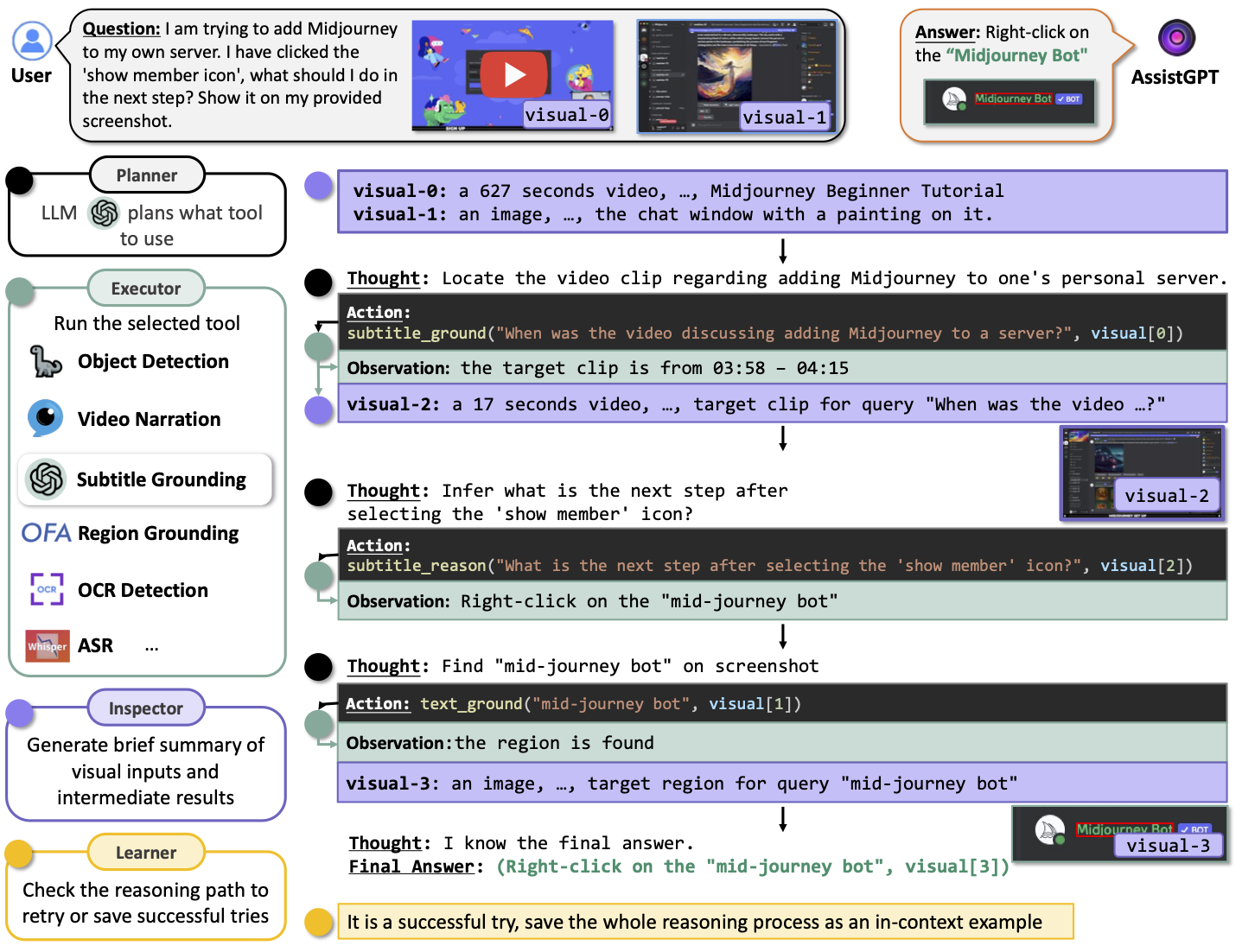

AssistGPT: A General Multi-modal Assistant that can Plan, Execute, Inspect, and Learn Difei Gao, Lei Ji, Luowei Zhou, Kevin QH. Lin, Joya Chen, Zihan Fan, Mike Z. Shou.

Preprint, 2023 |

Publications

Projects

|

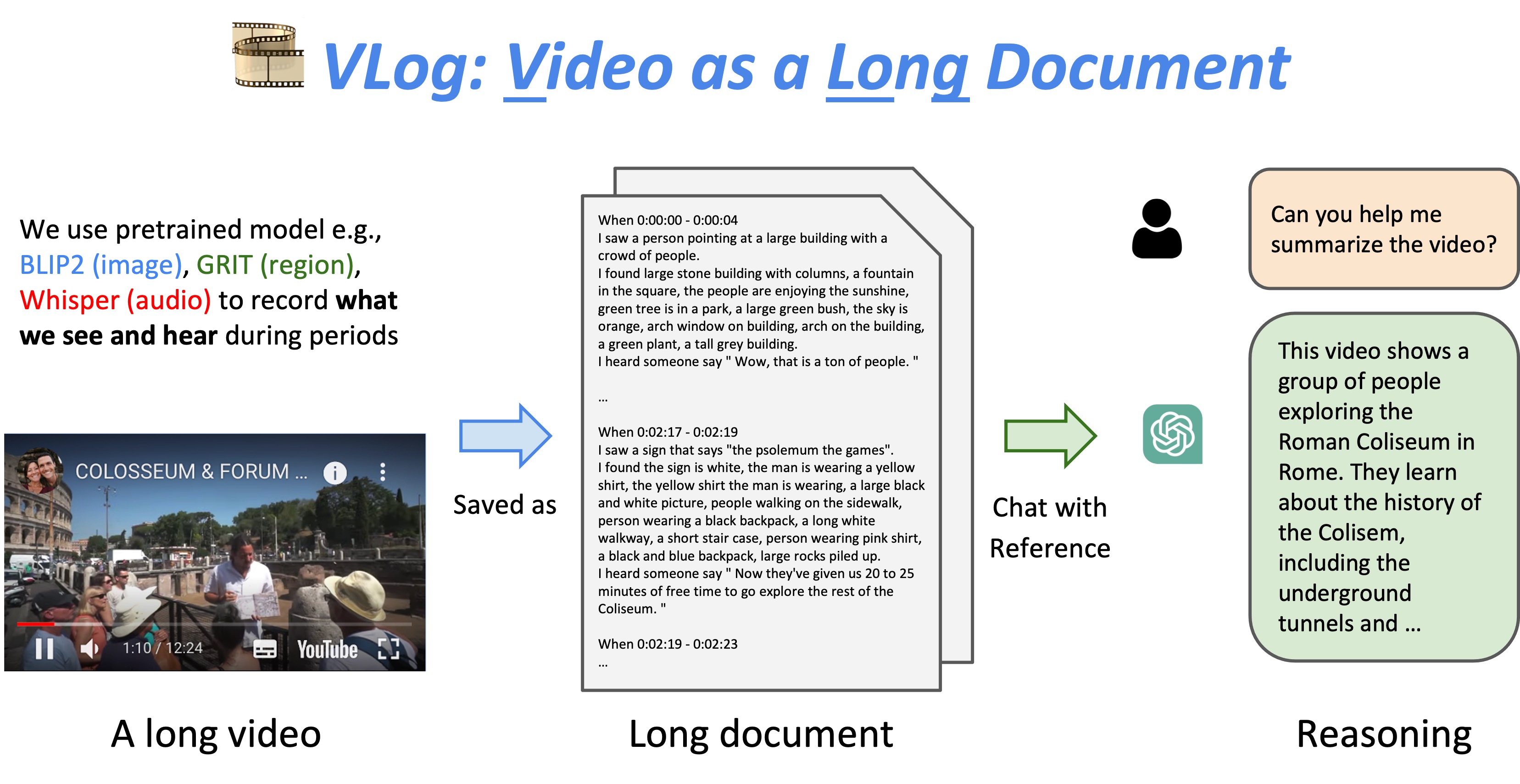

VLog: Video as a Long Document

[demo]

[code]

[twitter]

|

Honors

- Egocentric Vision (EgoVis) Distinguished Paper Award, 2024

- CVPR Outstanding Reviewers, 2024

- PREMIA Best Student Paper Awards, Gold Award, 2023

- Show Lab Annual Award, 2022

- NeurIPS Scholar Award, 2022

- Tencent Rhino-Bird Research Scholarship, Second Prize, 2022

- 1st Place on Ego4D - Object State Change Classification Challenge, CVPR 2022

- 1st Place on EPIC-Kitchens - Multi-Instance Retrieval Challenge, CVPR 2022

- China National Scholarship, 2018, 2021

Service

- Conference Reviewer: CVPR, ICCV, ECCV, ICML, NeurIPS, KDD, EMNLP, AAAI, IJCAI, ICME, ICASSP, ACM MM.

- Journal Reviewer: TPAMI, IJCV, TNNLS, TMM, Neurocomputing, Pattern Recognition, etc.

- Co-organizer of The AI Talks.